Artificial intelligence went mainstream in 2023. The watershed moment arrived at the end of the previous year, with the November 30 release of ChatGPT. Just two months later, the OpenAI system had reached an estimated 100 million monthly active users. According to analysts at investment bank UBS, the headline-grabbing chatbot had become the fastest-growing consumer app of all time.

Over the remaining course of 2023, the hype train went into overdrive. Suddenly, AI seemed to be everywhere. It was transforming our lives. It was taking our jobs. It was even threatening to cause an apocalypse.

In reality, however, the breakthroughs have largely emerged within a single portion of artificial intelligence: generative AI. The excitement sparked by ChatGPT’s text, GitHub Copilot’s code, and Stable Diffusion’s images has yet to spread across the field.

“While the use of GenAI might spur the adoption of other AI tools, we see few meaningful increases in organisations’ adoption of these technologies,” McKinsey concluded in its report on the state of AI in 2023.

“The percent of organisations adopting any AI tools has held steady since 2022, and adoption remains concentrated within a small number of business functions.”

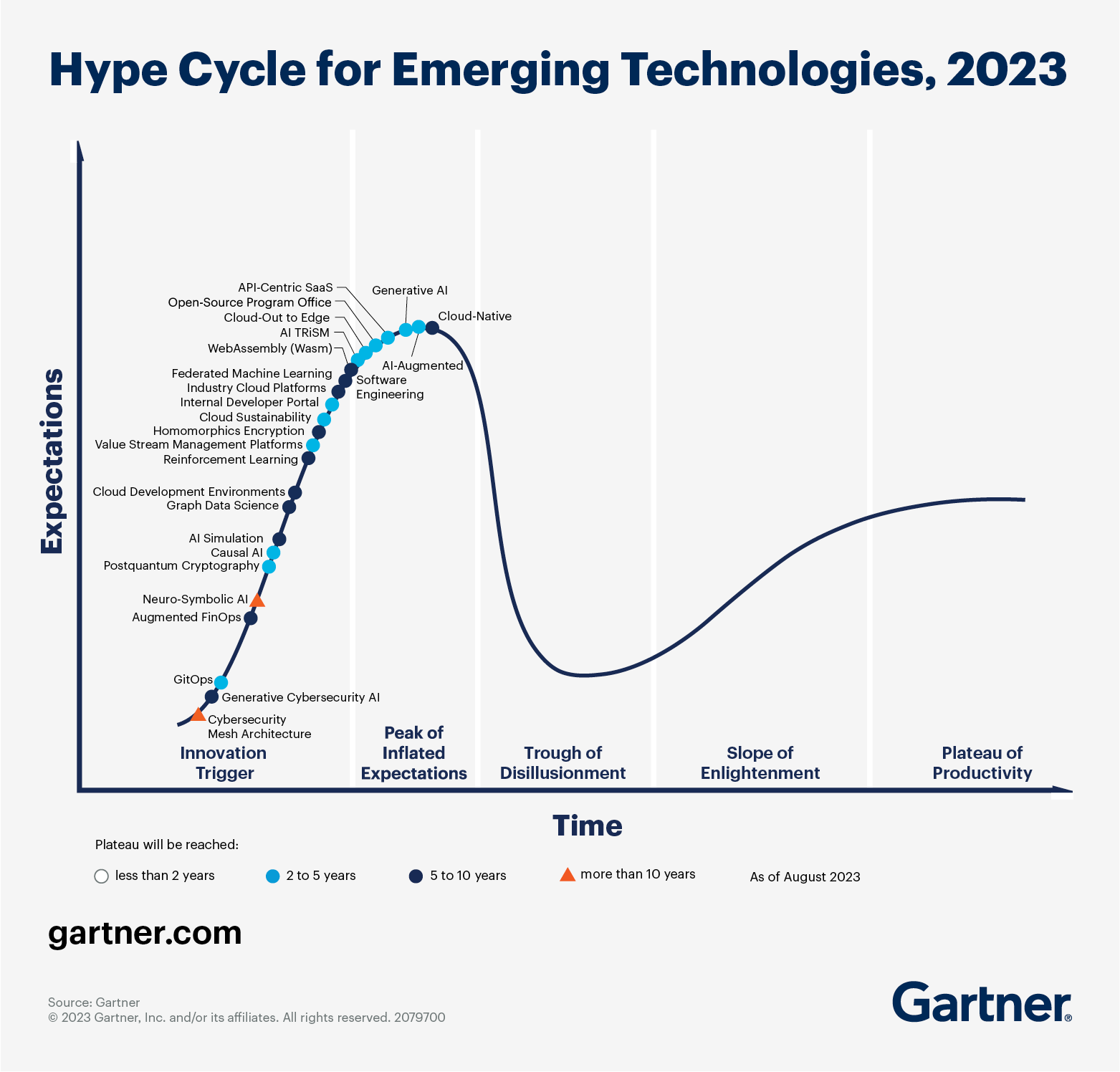

Generative AI also still has more to prove. Recent research from Indian IT giant Infosys found that only 6% of European companies are producing business value with their GenAI use cases. In Gartner’s famous hype cycle for emerging technologies, the subsector has reached the “peak of inflated expectations.”

The next stage of the cycle for GenAI is the “trough of disillusionment.” In that phase, interest wanes as experiments and implementations fail to deliver, while producers of the tech shake out or fail.

Gartner’s warning echoed across our conversations with European tech insiders. In 2024, they expect a cautious and pragmatic approach to AI adoption.

“Boardrooms need proof that these investments will increase the bottom line,” said Adi Andrei, the director of the UK’s Technosophics and a former senior data scientist at NASA. “A lot of money and effort has been poured into monetising ChatGPT and similar GenAI solutions, but the results are lacking.”

One critical shortcoming is the inaccuracy caused by AI hallucinations. While the remarkable text produced by large language models (LLMs) offers a veneer of reason, beneath the surface the systems merely calculate the probable order of different words. Those odds don’t always lead to correct results.
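The gap between "probable" and "correct" can be seen in a toy sketch. The snippet below is not how a production LLM works: it is a simple bigram counter, and the three-sentence corpus is a hypothetical example chosen so that the false statement appears more often than the true one.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, prev):
    """Turn the raw follow-up counts for `prev` into probabilities."""
    total = sum(counts[prev].values())
    return {word: n / total for word, n in counts[prev].items()}


# Hypothetical training text: the wrong answer is simply more common.
corpus = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]

counts = train_bigram(corpus)
probs = next_word_probs(counts, "is")
best = max(probs, key=probs.get)  # the most probable next word
print(best, probs)
```

The model picks "sydney" because it is the likelier continuation in its training text, not because it is true. Real LLMs use far richer context, but they face the same basic limitation.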

“Such superficial intelligence is not always valuable and reliable, and the industry is waking up to reality,” Andrei said.

Entering new spaces

Despite the challenges, GenAI is set to enter a growing range of industries in 2024.

According to McKinsey’s research, businesses that rely on knowledge work have the most to gain. The consulting firm expects tech companies to reap the biggest benefits, adding the equivalent of up to 9% of global industry revenue. Other sectors set to cash in are banking (up to 5%), pharmaceuticals and medical products (also up to 5%), and education (up to 4%).

Ali Chaudhry, the founder of the Generative AI and RL Community in London, predicts that the tech will also spread into manufacturing, engineering, automotive, aerospace, and energy industries.

“Unfortunately, given the ongoing political situation across the globe, we might as well witness growing investments in AI applications in the defence sector,” he added.

In many sectors, however, the uptake will be gradual. In the games industry, for instance, monetising AI remains challenging for most players. Paraag Amin, CFO of Slovakian startup SuperScale, a growth platform for games, doesn’t anticipate real revenues to emerge before 2025.

“Adopting AI and associated tools into widespread processes takes time,” Amin told TNW. “In this integration and adoption phase, monetization models will continue to evolve, and also compete with free or freemium offerings. This makes driving short-term revenue more difficult until these models stabilize and winners emerge.”

Future fears

There are also growing concerns about AI’s inroads into nefarious applications. With 1.5 billion people set to vote in national ballots next year, experts fear that deepfakes will turbocharge political disinformation.

Synthetic media also has the power to wreak havoc in boardrooms.

“Without sophisticated monitoring and detection tools, it’s almost impossible to detect this type of synthetic imagery,” said Andrew Newell, the chief scientific officer at biometrics firm iProov. “As such, we fully expect to see an AI-generated Zoom call lead to the first billion-dollar CEO fraud in 2024.”

Another pressing issue for 2024 is the scraping of web data to train AI systems.

Critics are now calling for curbs on the practice. Juras Juršėnas, the chief operating officer at web intelligence platform Oxylabs, warns that reining in data scraping will have mixed results.

“Unfortunately, restrictions on public web data collection might delay innovations in the AI field,” he said. “On the other hand, the web data collection industry has long lacked clear guidelines and answers regarding data ownership, privacy, and data aggregation at scale. So, we hope that case law will start clearing up those grey zones.”

Further legal clarity could emerge from regulation. Around the world, governments are taking diverging paths to control the tech. The EU’s AI Act applies a sweeping set of rules and a risk-based approach, while the US is following a more sector-specific model that aims to reduce red tape. In the UK, the interventions have thus far fallen somewhere between the two.

“What this means is that we’re going to see three distinct rulebooks develop across these three markets,” said Richard Bownes, principal for AI and ML at digital consultancy Kin + Carta. “2024 will see these rulebooks get tighter, which is going to catch out those companies who didn’t start building their data infrastructure that underpins AI use.”

The tip of the AI iceberg

Amid the increasing restrictions and fading hype, analysts expect GenAI to leave a positive legacy for the broader realm of artificial intelligence.

The experiences and technological advances will provide valuable insights, investments, and IT stacks for the sector. In 2024, other emerging techniques could take advantage of the GenAI boom.

Juršėnas highlights two particularly promising contenders. The first is federated learning, which enables the training of ML algorithms without direct access to private data. As a result, efficiencies, performance, algorithms, and privacy could all be enhanced.
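As a rough illustration of the idea, the sketch below uses federated averaging, one common way to do federated learning. Everything here is an assumption made for the example: the function names, the two hypothetical clients, and the one-parameter model `y = w * x`. Each client trains on its own data and sends back only an updated weight, so the server never sees the raw records.

```python
def local_update(w, data, lr=0.01, epochs=5):
    """Run a few gradient-descent steps on one client's private data.

    Only the updated weight leaves the client; `data` stays local.
    """
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(global_w, clients, rounds=50):
    """Server loop: collect client weights and average them by data size."""
    for _ in range(rounds):
        updates = [(local_update(global_w, data), len(data)) for data in clients]
        total = sum(n for _, n in updates)
        global_w = sum(w * n for w, n in updates) / total
    return global_w


# Hypothetical private datasets, both generated from y = 3 * x.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0), (4.0, 12.0), (5.0, 15.0)],
]

w = federated_average(0.0, clients)
print(round(w, 4))
```

The shared weight converges on the underlying slope of 3 even though no client ever reveals its data points. Real deployments typically add safeguards such as secure aggregation, which this sketch omits.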

The second is causal AI, which seeks to reduce bias and increase accuracy by distinguishing causation from mere correlation. In a sense, the approach brings AI closer to the workings of the human mind. Questions are posed as “what ifs” and connections are probed between cause and effect.
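A small worked example shows why correlation alone can mislead. The sketch below applies one basic causal-inference technique, adjusting for a known confounder by comparing like with like. The patient counts are hypothetical, invented so that a treatment looks harmful in the raw numbers only because it was mostly given to severe cases.

```python
# Hypothetical counts: (severity, treated) -> (patients, recovered)
data = {
    ("mild", True): (20, 18),
    ("mild", False): (80, 64),
    ("severe", True): (80, 40),
    ("severe", False): (20, 8),
}


def naive_effect(data):
    """Raw difference in recovery rates, ignoring severity (correlation)."""
    def rate(treated):
        patients = sum(p for (_, t), (p, _) in data.items() if t == treated)
        recovered = sum(r for (_, t), (_, r) in data.items() if t == treated)
        return recovered / patients
    return rate(True) - rate(False)


def adjusted_effect(data):
    """Compare treated and untreated within each severity group,
    then weight each group by its share of all patients."""
    total = sum(p for p, _ in data.values())
    effect = 0.0
    for group in {g for g, _ in data}:
        p_t, r_t = data[(group, True)]
        p_u, r_u = data[(group, False)]
        effect += ((p_t + p_u) / total) * (r_t / p_t - r_u / p_u)
    return effect


naive = naive_effect(data)
adjusted = adjusted_effect(data)
print(round(naive, 2), round(adjusted, 2))
```

The naive comparison suggests the treatment lowers recovery by 14 percentage points, while the adjusted "what if everyone were treated" comparison shows a 10-point improvement. Causal AI systems aim to make this kind of reasoning systematic, though real methods go well beyond this simple adjustment.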

“Federated machine learning and causal AI might help create a healthy competition in the AI field, which is currently dominated by only superficially intelligent generative systems,” Juršėnas said.

As for GenAI, Juršėnas expects the deployments to depend on the ability of providers to serve models as web-based APIs. That may not herald a revolution, but the results could still be powerful.

Indeed, the progress from hype to reality is where the true impact emerges. Bownes is bullish about the possibilities.

“AI and, in particular, generative AI enjoyed as much hype as any technology in the last 100 years,” he said. “It was inevitable that the results wouldn’t come as fast or be as impactful as to justify the level of excitement from even casual onlookers.

“What this means, though, is that as the market watchers find something else to look at, real use cases will emerge, the tech will develop, and excitement will begin anew. Much like the aftermath of an eruption, yes, the volcano is exciting, but the fertile land is what’s of actual use.”
